I completely missed the news that came out last July about the changes being made to the Selection Process for the NCAAT. When I saw articles and forum comments about the changes, I had to google around and see what I could find. Assuming you were ignoring college sports articles through the summer like I was, here’s a brief summary of what we missed.

WHY WERE CHANGES MADE?

A committee from the National Association of Basketball Coaches (NABC) asked that the difficulty in winning on the road be reflected on the team sheets used during the selection and seeding process. This comes on top of analysts arguing for years that more emphasis needed to be put on road wins.

SO EXACTLY WHAT CHANGES ARE BEING MADE?

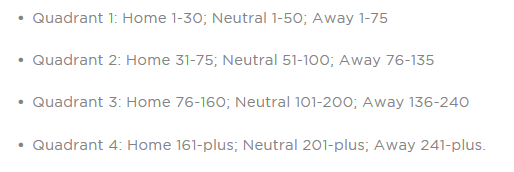

The big thing seems to be that team sheets will sort the games played into four quadrants:

Note that the NCAA link above lists all of the criteria that the Selection Committee uses.

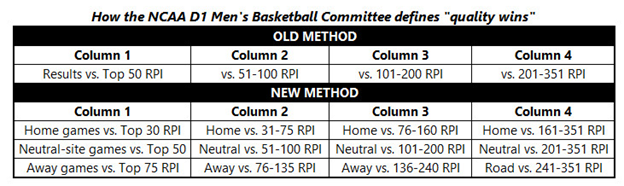

HOW DOES THIS COMPARE TO WHAT HAS BEEN USED IN THE PAST?

The old method was divided simply by RPI ranges:
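Since the cutoffs themselves only appear in the images, here is a rough Python sketch of both sorting schemes. The numbers are an assumption on my part: the quadrant ranges as the NCAA published them for 2017-18 (Q1 is home 1-30, neutral 1-50, away 1-75, and so on) and the old Top 50 / 51-100 / 101-200 / 201+ columns. The function names are made up for illustration.

```python
# Upper RPI bound of Quadrants 1-3 for each game location (assumed 2017-18
# cutoffs). Anything past the Quadrant 3 bound falls into Quadrant 4.
QUADRANT_BOUNDS = {
    "home": (30, 75, 160),
    "neutral": (50, 100, 200),
    "away": (75, 135, 240),
}

# The old team-sheet columns ignored game location entirely (assumed cutoffs).
OLD_COLUMN_BOUNDS = (50, 100, 200)


def _bucket(rank, bounds):
    """Return the 1-based bucket whose upper bound first covers the rank."""
    for number, upper in enumerate(bounds, start=1):
        if rank <= upper:
            return number
    return len(bounds) + 1


def new_quadrant(opponent_rpi, location):
    """Quadrant (1-4) under the new, location-aware scheme."""
    return _bucket(opponent_rpi, QUADRANT_BOUNDS[location])


def old_column(opponent_rpi):
    """Column (1-4) under the old, RPI-only scheme."""
    return _bucket(opponent_rpi, OLD_COLUMN_BOUNDS)


if __name__ == "__main__":
    # Hypothetical games: (opponent RPI rank, location).
    for rank, location in [(60, "away"), (35, "home")]:
        print(rank, location, "old column", old_column(rank),
              "new quadrant", new_quadrant(rank, location))
```

With these assumed cutoffs, a road game against the No. 60 team moves from the old second column into Quadrant 1, while a home game against the No. 35 team drops from the old first column into Quadrant 2.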

HOW DID THEY COME UP WITH THE NEW RPI RANGES?

“We consulted with experts within the coaching and analytics fields who looked at historical data, based on winning percentages by game location, to come up with these dividing lines within each of the columns,” Mark Hollis, Michigan State’s athletic director, and the outgoing committee chair, said. “The emphasis of performing well on the road is important, as was the need for teams not to be penalized as much for road losses. Beating elite competition, regardless of the game location, will still be rewarded, but the committee wanted the team sheets to reflect that a road game against a team ranked 60th is mathematically more difficult and of higher quality than a home game versus a team ranked 35th. We feel this change accomplishes that.”

WHO IS THIS CHANGE SUPPOSED TO HELP?

Mid-majors, of course. Every change made that I’m aware of since a 6-10 FSU got an NCAAT bid has been made to help the mid-majors. Let’s be clear about what “mid-major” means. I don’t think that anyone wants a second team from the bottom dozen or so conferences in the NCAAT. The changes were made to help the Temples or maybe Monmouths of college basketball:

It’s a huge step for mid-majors, schools forced to play more road games than the majority of teams from conferences that annually land four or more tournament bids.

“It also puts an emphasis on losses,” an NCAA source who was in the room in Chicago told CBS Sports. “If you look at Monmouth from a couple of years ago, they were dinged for losing games against teams in the 200s on the road. Now, in the current system, those teams would be in the third column now instead of the fourth. It’s not just the wins, but where those losses, which show up in red on the team sheets, land in the columns.”

Monmouth missed the NCAA Tournament in 2015 despite owning many neutral and road wins over power-conference teams (including victories over Notre Dame and USC). Under these guidelines, the Hawks may have landed an at-large bid.

“I think that’s good,” Monmouth coach King Rice told NCAA.com. “We should have gotten into the tournament that year. People still come up to me to this day and tell me that. We had one home game in our first 11 pre-conference games that year. We beat teams that we thought were going to be pretty good.”

Monmouth had a win over USC (51) on a neutral court and wins over UCLA (102), Siena (104) and Georgetown (106) in true road games. In the old system, the USC win would have been in the second category on the team sheet the selection committee studies and the other three would have been in the third category of 100 and above. But now they all slide down on the team sheet, to make the resume look more attractive.

VaWolf82 translation:

We didn’t really beat anyone that was any good. But we tried and that should have meant something!!!

SO WILL THE NEW SYSTEM REALLY HELP MID-MAJORS?

Let’s back up and discuss the last time that the RPI formula was changed by adjusting the winning percentages to give more credit for road wins and a bigger hit for home losses. The net effect was to elevate the RPI of teams at the top of mid-major conferences and lower the RPI of teams in the middle of the power conferences. At first glance, you would think that this change would help more mid-majors get at-large bids. But the professors behind The Dance Card found that getting an at-large bid better correlated with the old RPI formula. Why was that?

My theory is that a slightly higher RPI alone won’t increase your chances of getting an at-large bid. You need quality wins to earn a spot in the NCAAT. The new RPI formula devalues the win by a home underdog in a power conference…but the Selection Committee usually rewarded these upsets. They have always valued road/neutral wins, but not against the dregs of college basketball. So most of the mid-major road wins that elevated their RPI bought them nothing from the Selection Committee.
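To put rough numbers on that RPI change, here is a minimal Python sketch of just the winning-percentage piece. It assumes the commonly cited weights for the adjusted formula (a road win and a home loss each count 1.4, a home win and a road loss each count 0.6, neutral games count 1.0). The function names and both sample schedules are invented for illustration.

```python
# Assumed weights for the location-adjusted winning percentage.
WIN_WEIGHT = {"home": 0.6, "neutral": 1.0, "away": 1.4}
LOSS_WEIGHT = {"home": 1.4, "neutral": 1.0, "away": 0.6}


def plain_wp(games):
    """Unadjusted winning percentage: every game counts the same."""
    wins = sum(1 for _, won in games if won)
    return wins / len(games)


def weighted_wp(games):
    """Winning percentage with wins and losses weighted by location."""
    wins = sum(WIN_WEIGHT[loc] for loc, won in games if won)
    losses = sum(LOSS_WEIGHT[loc] for loc, won in games if not won)
    return wins / (wins + losses)


if __name__ == "__main__":
    # Two hypothetical 3-1 teams: one road-heavy, one that never left home.
    road_heavy = [("away", True), ("away", True), ("away", False), ("home", True)]
    home_heavy = [("home", True), ("home", True), ("home", True), ("home", False)]
    for name, games in [("road-heavy", road_heavy), ("home-heavy", home_heavy)]:
        print(name, round(plain_wp(games), 4), round(weighted_wp(games), 4))
```

Both made-up teams are 3-1, but under the assumed weights the road-heavy schedule comes out around .850 and the home-only schedule around .563. That is the kind of RPI bump described above, and it says nothing about who those wins came against.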

So will this change be any different?

Well, if the people that were so busy touting Monmouth had actually done their job, we would know. You can’t just look at the changes that would have happened to one team’s resume and draw any conclusions. The Selection Committee ranks every team from 1-68. If they are going to describe the changes to Monmouth, then they should also have done the same thing to the resumes of the last four teams to get at-large bids. Then look at all five resumes and see if Monmouth actually looked better than the lowest at-large bids. (PS…Monmouth’s resume was so bland, I didn’t mention them other than to make a snide remark in my post-Selection blog post that year.)

My guess is that the changes could help in certain situations. I don’t have any exact numbers but I suspect that even with the new changes, teams from the middle of power conferences will still have more opportunities to get Quadrant 1 wins than most mid-majors. If a team is any good…more opportunities will translate into more big wins and ultimately more bids.

Now that we know the Dance Card is being updated this year, we can get some clues about how much the new quadrants change the selection process when we compare the actual bids to the Dance Card’s predictions. We probably won’t know anything definitive until there is enough data for the Dance Card professors to analyze the at-large bids given out under the new system.

In any event, I would be willing to bet that at least one mid-major will be touted as an example of where the new system helped earn them an at-large bid. We’ll have to take a look at any such examples to see whether the new system actually helped.

DOES THE SELECTION COMMITTEE USE ANY COMPUTER METRIC BESIDES RPI?

Yes, but with the following caveat:

Each of the 10 committee members uses these various resources to form his or her own opinion, resulting in the committee’s consensus position on selection and seeding.

So other metrics are available to the Selection Committee, but it doesn’t appear that there are any set rules on how to use them. But we definitely know that the RPI is used by the committee because:

- They specifically say so in the above link.

- The professors behind the Dance Card have proven it by statistical analysis.

EXACTLY WHICH NEW METRICS ARE PRESENTED TO THE SELECTION COMMITTEE?

…For the first time, committee members will see rankings from ESPN’s BPI and Strength of Record, KPI, Jeff Sagarin Ratings and KenPom.com next to the RPI on the official team sheets used to assess each program…

The tools all vary in how they assess a team. The RPI, KPI and ESPN’s Strength of Record aim to capture the quality of a team based on its current résumé. And ESPN’s BPI, Sagarin and KenPom.com ratings attempt to predict how a team will perform in the future…

Note that I strongly disagree with using metrics that attempt to predict the future to fill or seed the NCAAT. Both at-large bids and seeds should be earned, not a product of predictive algorithms. Predictive algorithms need to stay in the domain of geeks and gamblers.

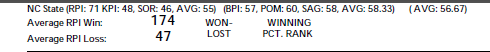

Here’s a snip from State’s team sheet from the NCAA on Sunday after the UNC loss (upper left corner of team sheet):

Warning: The above link goes to a PDF that has one page for each of the 351 teams in Division 1. Even worse, it doesn’t appear that the PDF is searchable. The NCAA also publishes a “nitty-gritty” report with one summary line for each team…not searchable either. Surprisingly, the NCAA has been publishing these each morning for the last several weeks. Just make sure that you don’t click the women’s basketball links by mistake.

I don’t have any clue about the KPI other than it was created by Kevin Pauga. There’s always Google if you want to learn more.

HOW MANY QUADRANT 2 WINS ARE REQUIRED TO EQUAL ONE QUADRANT 1 WIN?

HOW BADLY DO QUADRANT 3 OR 4 LOSSES HURT?

After seeing the definitions of the new quadrants, these are obviously two of the most important questions. Unfortunately, I haven’t found anything that comes close to answering either question. But before we start speculating on the future, let’s back up and look at what we know about the past.

I generally make an effort to link interviews with the Selection Committee to hopefully get more information about the inner workings. The problem with this approach is that sometimes you get information that is lacking proper context. For instance, when the selection committee says that someone got in because of their road wins…was that a general criterion or just the area where that particular team stood out from the others competing for the last few bids? Conversely, when the Selection Committee said that SYR was left out last year because they didn’t have good road wins, was that the only reason or was it just more palatable than saying that their RPI sucked?

So if we mostly ignore what people say and look at what they do, we should be able to use the inputs from the Dance Card to illuminate the selection process used before this season. From my entry last year on the Dance Card, we know that the following things can be used to project at-large bids:

– Overall RPI rank (using RPI formula in use prior to 2005)

– No. of conference losses below 0.500 record

– Wins vs. teams ranked 1–25 in RPI

– Wins vs. teams ranked 26–50 in RPI

– No. of road wins

– No. of wins above 0.500 record against teams ranked 26–50 in RPI

– No. of wins above 0.500 record against teams ranked 51–100 in RPI

I put them in this order because that’s the way they were listed in a paper published by the professors. One would think that they are listed in order of their weight with the most important being at the top. In any event, we can look at those factors associated with the quadrants and make some semi-educated guesses.

In the old system, all wins against the RPI Top 50 were classified as good wins. But when the professors dug a little deeper, not all Column 1 wins were treated the same. Specifically, the good wins actually contributed in three different ways:

– Wins vs. teams ranked 1–25 in RPI

– Wins vs. teams ranked 26–50 in RPI

– No. of wins above 0.500 record against teams ranked 26–50 in RPI

So it seems reasonable to conclude that the old Column 1 games were mentally split into “good” wins and “really good” wins by the Selection Committee. You could make snide comments about this, but I choose to believe that this shows how much time the Selection Committee spent studying the candidate teams. So one of the many questions that comes up is whether the new Quadrant 1 games will be mentally split as well. How much “mental” weight will a road win against a Top 75 team carry when compared with other Quadrant 1 wins?

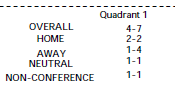

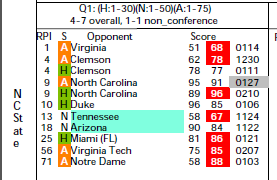

Obviously we don’t have any answers, but here is an example from State’s team sheet on how the Quadrant 1 games are presented to the committee.

The color coding is defined at the bottom of the team sheet…but from my viewpoint, the whole layout is ugly enough that it would make my eyes bleed to stare at it for very long. Whoever decided to lump the wins and losses together by sorting based only on opponents’ RPI is an idiot. The games in each quadrant should be separated into wins and losses and both then sorted by RPI. My arrangement would make it much easier to compare one team with another when the committee starts working towards the end of the Bubble.
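The arrangement suggested above is easy enough to sketch in Python. This is only an illustration: the game records are made up, the field names are hypothetical, and each game is assumed to already carry its quadrant.

```python
from collections import defaultdict


def arrange_team_sheet(games):
    """Group games by quadrant, split wins from losses, sort each by opponent RPI."""
    sheet = defaultdict(lambda: {"wins": [], "losses": []})
    # Sorting once up front keeps every wins/losses list in RPI order.
    for game in sorted(games, key=lambda g: g["opp_rpi"]):
        side = "wins" if game["won"] else "losses"
        sheet[game["quadrant"]][side].append(game)
    # Return a plain dict ordered by quadrant number.
    return dict(sorted(sheet.items()))


if __name__ == "__main__":
    # Made-up games for a made-up team.
    games = [
        {"opponent": "Team A", "opp_rpi": 40, "quadrant": 1, "won": False},
        {"opponent": "Team B", "opp_rpi": 12, "quadrant": 1, "won": True},
        {"opponent": "Team C", "opp_rpi": 70, "quadrant": 1, "won": True},
        {"opponent": "Team D", "opp_rpi": 90, "quadrant": 2, "won": True},
    ]
    for quadrant, sides in arrange_team_sheet(games).items():
        for side in ("wins", "losses"):
            names = [f'{g["opponent"]} ({g["opp_rpi"]})' for g in sides[side]]
            print(f"Q{quadrant} {side}: {', '.join(names) or 'none'}")
```

Laid out this way, two teams’ Q1 wins sit side by side in rank order instead of being interleaved with their losses.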

But if you can get past the horrible presentation, it is not that hard to pick out the RPI ranking of the Q1 wins. So it would not be that difficult to mentally attach extra weight to a team with a Top25 win versus a team with a Top75 win on the road. On the other hand, it is not hard to imagine a scenario where the committee bends over backwards to treat all Q1 wins the same because of the new process. Only time will tell which scenario plays out in this year’s selection process.

For the old Column 2 games, both wins and losses count in that the professors found that wins above .500 against 51-100 showed some statistical significance in the selection process. So it seems likely that the Q2 wins will carry as much weight as they did in the past…even though it’s not obvious exactly how much weight was ever given to these second-level wins. But based on past quotes from the Selection Committee, the Q2 wins can make a difference when deciding on those last few at-large bids.

SIDE NOTE

ND needs to keep winning unless they meet State in the ACCT. As long as ND remains in the Top75, the loss will be in Q1 and the win in Q2. The FSU and L’ville games also look to be Q2 games. Right now, it seems likely that State will only end up with those three games in Q2 before the ACCT. The uncertainty on how Q2 games will be used adds a little extra importance for State to do no worse than a split with FSU/L’ville.

IS THERE ANY CHANCE OF GETTING RID OF THE RPI?

There is one anticipated change that didn’t happen in Chicago: removal of the RPI as the primary ranking source. It’s the data point that builds team sheets, and has been criticized as outdated and manipulated by savvy schedulers. The NCAA notes in its press release that there is a “likelihood of a new metric being in place for the 2018-19 season.” The NCAA will attempt to run a composite ranking system, plus a separate, unique, independent formula next season as a test run behind the scenes. Think of it like a dress rehearsal: the NCAA wants to see how a composite metric and its individual ranking system perform vs. other established metrics before signing off on an overhaul.

“The bottom line is we recognize the need to continue using more modern metrics and the need to make those more front and center in the sorting of data for the selection and seeding process,” senior vice president of NCAA basketball Dan Gavitt said. “However, it’s also critical to have a long-term solution that is tested in real time, so we can roll something out that we have complete confidence in, is mathematically sound and is acceptable in every stakeholder’s eyes.”

The movement to introduce more modern metrics into the evaluation process was formally sparked with a meeting of analytical minds in January. The NCAA is motivated to evolve its selection process, but wants to be certain it has an accurate metric (or composite) before it downgrades the RPI.

Note that it is beyond silly to claim that you need to evaluate the various metrics this year, before making a decision on what metric(s) to use. First of all, you should almost never make a technical decision based on only one data point. Secondly, why couldn’t they look at the last five or ten years and see how the different systems perform relative to the decisions that were made then?

WILL WE GET ANY MORE DEFINITIVE EXPLANATIONS BEFORE SELECTION SUNDAY?

Maybe

In one of the best PR moves of all time, the NCAA started hosting mock selection sessions to explain and illustrate the process for the media. While it’s obvious no one from CBS or ESPN ever attended, every article that I’ve read was highly complimentary of the event. The reason I mention this is that the dates of past years’ mock events always seem to be in mid-February. So keep your eyes peeled for articles from this year and link them here for our collective education.